TL;DR

AI coding tools in 2026 look crowded from the outside and narrower from the inside. Frontier models cluster tightly on the benchmarks that get published, and the gap that actually matters — the one between what an IDE agent does in a demo and what it does on your production repository — is not well measured yet. This guide summarises the benchmark evidence, names where it is reliable, and flags where vendor claims outrun the data.

Key Takeaways

- SWE-bench Verified, the cleaned-up standard benchmark for repository-level tasks, shows frontier coding systems clustering in the 60–75 percent solve-rate band as of early 2026, with the top scores held by closed models and a narrow gap to the strongest open-weights entries.

- METR's July 2025 randomized controlled study found experienced open-source developers using AI tools took 19 percent longer than without them while believing they were 20 percent faster — the most robust productivity finding in the current literature.

- Aider's public polyglot leaderboard and the Chatbot Arena Coding board both track model-level strength on coding tasks, but neither measures the agent wrapper, tool access, or retrieval layer that produce most of the real-world variance.

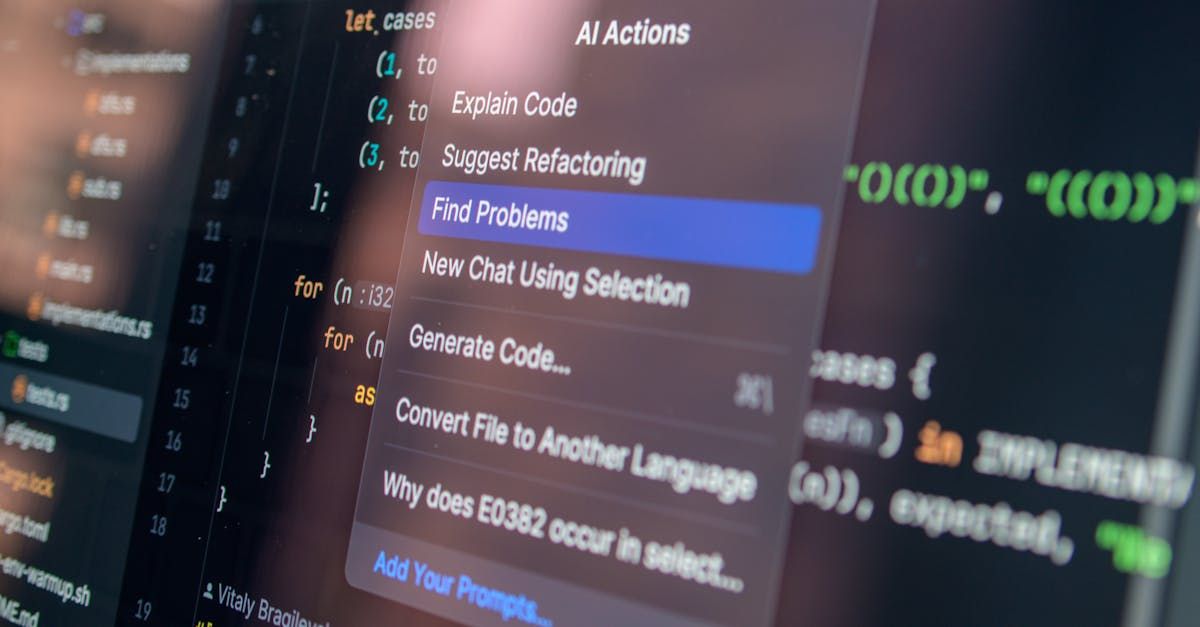

- GitHub Copilot, Cursor, Claude Code, Windsurf, Cline, Aider, Continue, and Codex CLI are the tools that matter going into 2026. Each uses a different combination of model, context strategy, and tool access, and "best tool" is now a question about integration, not raw capability.

- Model Context Protocol (MCP), shipped by Anthropic in late 2024, is now the de-facto standard for how coding agents talk to tools, repositories, and documentation — the ecosystem effect on tool choice is larger than any single model upgrade in the same window.

- The evaluation-to-production gap — between clean benchmark tasks and messy multi-service work — remains the most underweighted variable in vendor messaging, and teams measuring their own workflows see that gap as the dominant factor in whether a tool earns its cost.

What the benchmarks actually measure

Let me walk you through the evidence layer by layer, because the tool-picking decision hinges on which benchmark you treat as load-bearing.

SWE-bench Verified is the benchmark that changed how we talk about coding agents. Released by OpenAI in collaboration with the original SWE-bench authors, it is a human-validated 500-task subset of SWE-bench that drops ambiguous or untestable issues and uses real GitHub issue-to-PR pairs from popular Python repositories. It measures whether an agent can read an issue, navigate a codebase, and produce a patch that passes the repo's tests. As of early 2026, frontier models cluster in the 60–75 percent solve-rate band on this benchmark, with closed models at the top and strong open-weights entries within a handful of points. The gap between the top model and the fifth model is small: a few percentage points.

HumanEval and MBPP measure function-level code synthesis from a docstring. Saturating these is easy now — most frontier models report 90+ percent pass@1. These numbers are not load-bearing for tool selection in 2026 because the task is too narrow; they tell you almost nothing about agent behaviour on a 200k-line repo.
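For readers who have not looked at how a pass@1 figure is computed, here is a minimal sketch of the standard unbiased pass@k estimator used with HumanEval-style benchmarks. The function name and the sample counts are illustrative, not taken from any vendor's evaluation harness.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k from n generated samples, of which c passed the tests.

    This is the probability that at least one of k samples, drawn without
    replacement from the n generated, is correct.
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    # comb(n - c, k) is 0 when there are fewer than k failing samples,
    # so the estimate is 1.0 in that case.
    return 1.0 - comb(n - c, k) / comb(n, k)


# Illustrative numbers: 10 samples for one problem, 9 of them pass.
single_try = pass_at_k(n=10, c=9, k=1)
three_tries = pass_at_k(n=10, c=9, k=3)
```

With k=1 the estimator reduces to c/n, which is why a 90+ percent pass@1 leaves so little room to separate models.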

Aider's polyglot leaderboard tests edit-and-apply workflows across multiple languages. It is more useful than HumanEval for picking a pair-programming tool because it measures whether the model produces a diff that actually applies cleanly. Rankings on this leaderboard shift month to month as models get tuned for tool-call precision.

Chatbot Arena Coding is crowd-sourced preference, which is a different thing than a solve-rate benchmark. It captures what developers subjectively prefer in short interactions and tends to be sticky — preference lags capability by roughly one model generation.
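To make the difference from a solve-rate benchmark concrete, the sketch below shows how a single pairwise preference vote can move two ratings under an Elo-style update. This is an assumption chosen for illustration; the article does not describe the board's actual aggregation method, and the K-factor and starting ratings are hypothetical.

```python
def elo_update(rating_a: float, rating_b: float, a_wins: bool, k: float = 32.0):
    """Return updated (rating_a, rating_b) after one head-to-head preference vote."""
    # Expected score of A given the current rating difference.
    expected_a = 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))
    score_a = 1.0 if a_wins else 0.0
    delta = k * (score_a - expected_a)
    # Whatever A gains, B loses, so the total rating mass is conserved.
    return rating_a + delta, rating_b - delta


# Two equally rated models; a voter prefers model A's answer.
new_a, new_b = elo_update(1000.0, 1000.0, a_wins=True)
```

Nothing in this update checks whether either answer was correct. The rating moves on preference alone, which is why a board like this can diverge from solve rates.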

METR's productivity study, published in July 2025, is the one piece of evidence I would not let a team skip. In a randomized controlled trial of sixteen experienced open-source developers working on their own repositories, tasks took 19 percent longer with AI tools than without. The developers estimated they were 20 percent faster. That 39-point perception-versus-measurement gap is the single most important signal in the current literature, and it does not show up on any coding leaderboard.
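The arithmetic behind the 39-point figure is worth writing out. The sketch below assumes a hypothetical 60-minute baseline task and reads "20 percent faster" as "20 percent less time"; both are my assumptions for illustration, not details from the study.

```python
def minutes_with_ai(baseline_minutes: float, change_pct: float) -> float:
    """Task duration after a percentage change in time (positive = slower)."""
    return baseline_minutes * (1 + change_pct / 100)


BASELINE_MINUTES = 60.0       # hypothetical task length without AI tools
MEASURED_CHANGE_PCT = 19.0    # measured: tasks took 19 percent longer
PERCEIVED_CHANGE_PCT = -20.0  # perceived: developers felt 20 percent faster

measured_minutes = minutes_with_ai(BASELINE_MINUTES, MEASURED_CHANGE_PCT)
perceived_minutes = minutes_with_ai(BASELINE_MINUTES, PERCEIVED_CHANGE_PCT)

# Distance between perception and measurement, in percentage points.
gap_points = MEASURED_CHANGE_PCT - PERCEIVED_CHANGE_PCT
```

Under these assumptions a one-hour task feels like about 48 minutes and actually takes about 71.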

| Tool | Primary interaction | Model surface | Strongest fit |
|---|---|---|---|
| GitHub Copilot | Inline completion + chat | Multiple models, gated by tier | Existing GitHub-heavy teams |
| Cursor | Full IDE with agent mode | Model-selectable; Claude and GPT defaults | Teams wanting a coding-first IDE |
| Claude Code | CLI-native agent with MCP | Claude Sonnet/Opus | Terminal-centric senior developers |
| Windsurf | IDE with long-horizon agent | Model-selectable | Agent-driven refactors |
| Cline | VS Code agent extension | BYO model | Teams with on-prem or custom-model needs |
| Aider | Terminal pair-programmer | BYO model, repo-map aware | Solo developers wanting surgical diffs |
| Continue | Open-source IDE extension | Fully BYO | Teams needing customisation and auditability |
| Codex CLI | OpenAI's terminal agent | OpenAI models | OpenAI-stack teams |

The useful way to read this table is by surface, not by model. Every tool in it can run a frontier model. What separates them is how they package context, how they expose tools, and how they handle failure. The selection question has moved from "which model" to "which wrapper plus which model plus which integration surface."

Benchmark performance and production performance diverge in predictable places, and naming them explicitly is the most useful thing a buying team can do.

Long-range context. SWE-bench tasks are scoped to specific repositories with specific issues. Production repositories have cross-service dependencies, undocumented conventions, and historical decisions that are not in the code. Tools with strong repository-map tooling — Aider's repo-map, Cursor's index, Claude Code's MCP-based traversal — outperform raw model strength on these tasks. This is a context-engineering win, not a capability win.

Tool-call precision. Multi-step agent workflows are bottlenecked by the weakest tool-call in the chain. A single mis-formatted shell argument ends the run. Published benchmarks rarely report tail behaviour. Teams running their own eval suites consistently find that tool-call error rates dominate the variance in agent completion, not reasoning quality.
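A back-of-the-envelope model shows why per-call error rates dominate. The sketch assumes every call succeeds independently with the same probability and that one failure ends the run with no retry; real agents retry and recover, so treat it as a pessimistic bound, not a measurement.

```python
def chain_success_rate(per_call_success: float, calls: int) -> float:
    """Chance that every call in a run succeeds, given independent calls and no retries."""
    if not 0.0 <= per_call_success <= 1.0 or calls < 0:
        raise ValueError("per_call_success must be in [0, 1] and calls >= 0")
    return per_call_success ** calls


# Hypothetical agent whose individual tool calls succeed 98 percent of the time.
for calls in (5, 20, 50):
    rate = chain_success_rate(0.98, calls)
    print(f"{calls:>2} calls: {rate:.1%} of runs complete")
```

Under these assumptions a 98 percent per-call rate leaves roughly two runs in three completing a 20-call task, and closer to one in three at 50 calls.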

Diff-apply fidelity. A model that writes correct code in chat but produces a diff that fails to apply is, in production terms, worse than a model that writes slightly less ambitious code with clean diffs. Aider's leaderboard surfaces this dimension cleanly; most others hide it.
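One way to see what "applies cleanly" means is a strict search/replace edit check. This is a hypothetical, simplified edit format written for illustration; it is not Aider's implementation or any tool's actual diff engine.

```python
def apply_edit(source: str, search: str, replace: str) -> str:
    """Apply one search/replace edit, refusing anything ambiguous or stale."""
    if not search:
        raise ValueError("edit does not apply: empty search text")
    matches = source.count(search)
    if matches != 1:
        # Zero matches: the model misquoted the file.
        # Several matches: the edit target is ambiguous.
        raise ValueError(f"edit does not apply cleanly: {matches} matches")
    return source.replace(search, replace, 1)


original = "timeout = 30\nretries = 3\n"
patched = apply_edit(original, "retries = 3", "retries = 5")

try:
    # The model "remembers" a line that is not in the file.
    apply_edit(original, "retries = 4", "retries = 5")
    stale_edit_rejected = False
except ValueError:
    stale_edit_rejected = True
```

A model that misquotes even one character of the search text fails here no matter how good the replacement is. That failure mode never shows up when the same code is only judged in chat.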

Latency against thinking time. Longer thinking budgets raise solve rates on published benchmarks, but in practice they can cost more than they return, because developers context-switch during long-running agent operations. The trade-off is not visible on a leaderboard; it shows up in time-to-merge metrics that the benchmarks do not try to measure.

Reading the open-weights story honestly

Open-weights coding models closed most of the gap to frontier closed models during 2025. GLM-5 and GLM-5.1 from Zhipu, Qwen's Coder releases, and the DeepSeek line all landed within single-digit percentage points of the leading closed models on SWE-bench Verified. This shift matters for cost structure and for teams with data-sovereignty constraints — running a strong open-weights coding agent on your own infrastructure is now a defensible engineering choice, not a compromise.

What has not closed is the tooling gap. The IDE and agent surfaces that ship with frontier closed-model defaults are further ahead than the open-weights models they would drop into. Self-hosting a strong coding model still means building or wiring the agent layer yourself. Tools like Cline and Continue exist specifically to close this gap and are worth evaluating alongside the hosted options for any team that cares about where inference runs.

Where this is heading

The leaderboard gap will keep compressing; the integration gap will not. Frontier model performance on clean coding benchmarks is saturating quickly. The variation left to harvest is almost entirely in the agent layer, the context strategy, and the tool surface. Expect more meaningful product differentiation from how tools read codebases, run tests, and handle failures than from which underlying model they wrap.

MCP will keep pulling the ecosystem toward interoperability. The Model Context Protocol ecosystem matured through 2025 and is now the common integration layer for a growing list of tools. The practical effect is that the cost of switching between coding tools is falling — portable context servers mean a team's investment in integrations survives a tool change. That reshapes how to reason about vendor lock-in.
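Part of why integrations are portable is that MCP messages are plain JSON-RPC 2.0. As I understand the specification, a tool is invoked with a `tools/call` request that carries the tool name and an arguments object. The sketch below builds such a request; the tool name `search_docs` and its arguments are hypothetical, and the current specification should be checked before relying on the exact shape.

```python
import json


def tools_call_request(request_id: int, tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request asking an MCP server to run one tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical documentation-search tool exposed by some MCP server.
request = tools_call_request(1, "search_docs", {"query": "retry policy"})
wire_message = json.dumps(request)
```

Because the request names only the tool and its arguments, the same server can sit behind any client that speaks the protocol. That is the mechanism behind the falling switching cost.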

Evaluation will get harder, not easier. As models saturate existing benchmarks, the useful evaluation work moves toward longer-horizon, multi-repository, multi-service tasks. Expect METR-style controlled productivity studies and private-repo evals to carry more weight than public leaderboards in 2026–2027. Vendor-supplied evals will remain an input; self-run evals will become the deciding input for larger teams.

Specialised coding agents will continue to outperform horizontal ones on specialised tasks. Security review, migration work, and large-scale refactors are increasingly served by vertical agents that bake domain assumptions into their pipelines. The horizontal IDE-agent surface will stay the default for general coding; specialised surfaces will keep eating narrow, high-value tasks.

FAQ

Which AI coding tool is best?

No benchmark answers this directly, because the useful answer depends on stack, repository size, language mix, and whether terminal-first or IDE-first workflows fit the team. SWE-bench Verified narrows the model question. Aider's leaderboard narrows the diff-apply question. METR's study narrows the productivity-claim question. Combine them.

How seriously should I take the METR 19 percent slowdown?

Seriously as a directional finding; less seriously as a universal number. The study is small and task-specific, but the direction has been echoed in informal evaluations at Google and Anthropic. The honest interpretation: assume your personal productivity gain is smaller than your perception of it, and measure your own team before quoting a vendor's number.

Is the open-weights story real, or is it benchmark gaming?

Real, with caveats. Open-weights coding models have closed most of the gap on clean benchmarks. The remaining gap is in tooling and in long-horizon agent work. If your team can invest in the agent layer, open-weights is viable now in a way it was not in 2024. If not, hosted closed-model tools still dominate out-of-the-box experience.

Does model choice still matter if the agent wrapper is strong?

Yes, but less than vendor marketing implies. A strong wrapper can narrow the gap between a great model and a decent one by 5–10 points on production tasks. A weak wrapper can waste a great model entirely. Wrapper and context strategy matter more in 2026 than in any prior year.

Should I pick a tool now or wait for the models to settle?

Pick now, but pick for wrapper quality and exit cost. Models will keep improving. Wrapping, integration, and MCP-ecosystem support will be stickier choices. Avoid tools that lock context into proprietary formats; prefer ones that let you take your repo map and integration wiring with you.

What is the single most useful thing to measure on my own team?

Time-to-merge on agent-assisted PRs versus comparable non-assisted PRs, with an explicit tag for which tool was used. Track it for one full sprint minimum. Aggregate productivity claims hide the variance that actually decides whether a tool is worth its licence.
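A minimal sketch of that measurement, assuming you can export each PR's open time, merge time, and tool tag. The field names, the tool label, and the sample rows are hypothetical; the median is used so that one stalled PR does not swamp the comparison.

```python
from collections import defaultdict
from datetime import datetime
from statistics import median


def median_hours_to_merge(prs):
    """Group PRs by tool tag and return the median open-to-merge time in hours."""
    hours_by_tool = defaultdict(list)
    for pr in prs:
        tool = pr["tool"] or "none"  # untagged PRs form the comparison group
        elapsed = pr["merged"] - pr["opened"]
        hours_by_tool[tool].append(elapsed.total_seconds() / 3600)
    return {tool: median(hours) for tool, hours in hours_by_tool.items()}


# Hypothetical export from a code-hosting platform.
sample_prs = [
    {"tool": "agent-a", "opened": datetime(2026, 1, 5, 9), "merged": datetime(2026, 1, 5, 15)},
    {"tool": "agent-a", "opened": datetime(2026, 1, 6, 9), "merged": datetime(2026, 1, 6, 19)},
    {"tool": None, "opened": datetime(2026, 1, 5, 10), "merged": datetime(2026, 1, 5, 14)},
]
result = median_hours_to_merge(sample_prs)
```

Comparable PRs matter more than the code. If agent-assisted PRs are systematically larger or riskier than the untagged ones, the medians will not be comparing like with like.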