ChatGPT vs DeepSeek: Which Free AI for Beginners is Smarter?

ChatGPT and DeepSeek are two leading free AIs for beginners. This guide compares their features, writing skills, and ease of use to help you choose.

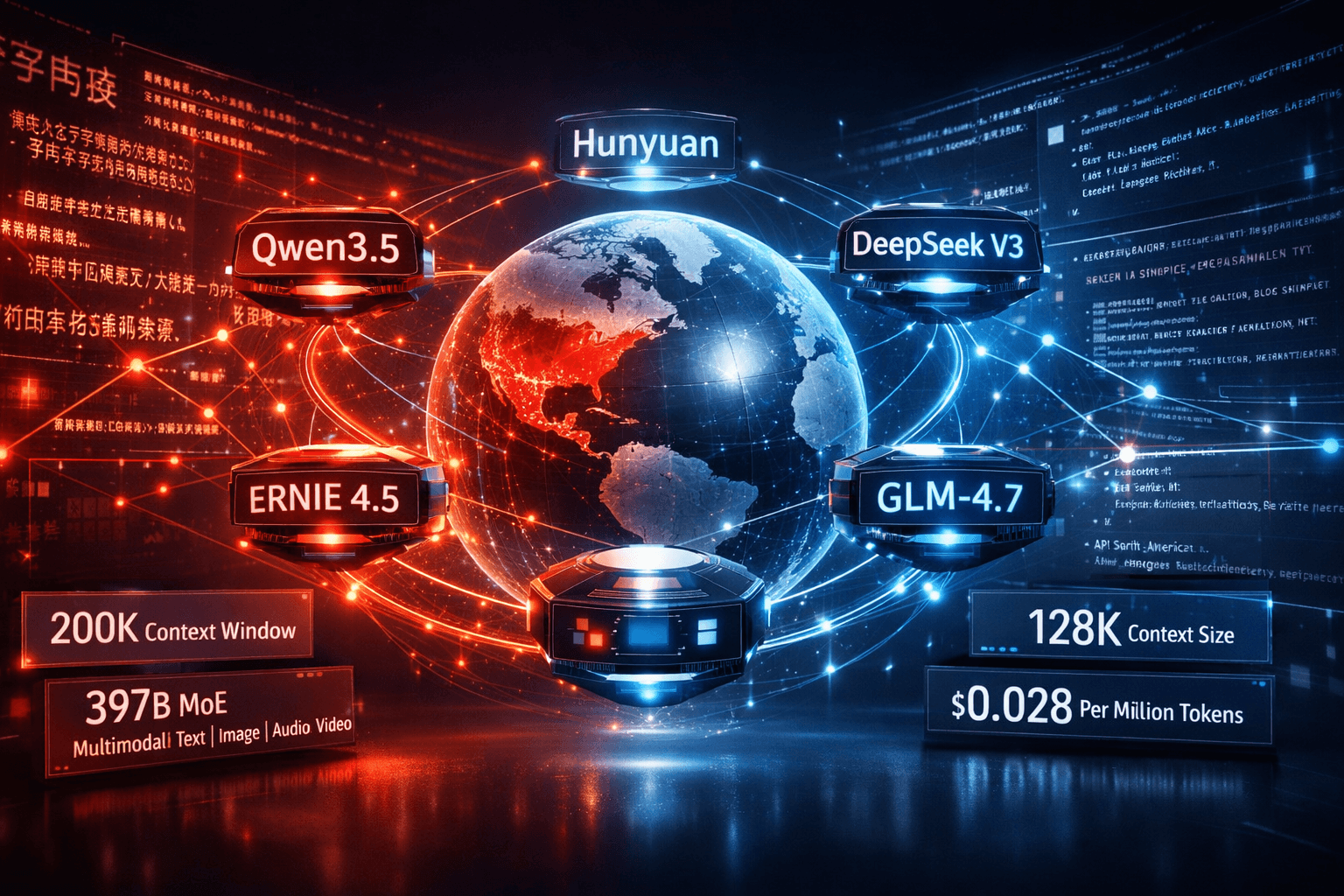

TL;DR: China's major LLMs — Qwen3.5, DeepSeek V3, Baidu ERNIE 4.5, Tencent Hunyuan, and Zhipu GLM-4.7 — have closed the benchmark gap with OpenAI and Anthropic. For bilingual Chinese–English workflows, the decision turns on deployment model, context window, and how much Chinese-language quality matters relative to your English output requirements.

In January 2025, DeepSeek R1 scored 79.8% on AIME 2024, narrowly ahead of OpenAI o1 at 79.2%. [Confirmed] That result forced a broad reassessment. Chinese LLMs in 2026 are not a category to monitor at a distance; they are production-viable today for teams with bilingual workflows.

Since DeepSeek R1's release, Alibaba, Baidu, Tencent, and Zhipu AI have each published open-weight models that compete directly with GPT-4o and Claude Sonnet on standard benchmarks. For teams evaluating [AI chatbots and LLM APIs](/category/ai-chatbots-llm-apis), the question has shifted. It is no longer "are these models good enough?" It is: which one fits your deployment constraints, your language balance, and your cost structure?

This post covers five models available as of February 2026. All handle Chinese and English. The differences between them matter more than the similarities.

Qwen3.5 (Alibaba, February 2026)

Released February 16, 2026, Qwen3.5 is a 397B-parameter Mixture-of-Experts model that activates only 17B parameters per inference step. [Confirmed] It supports 201 languages — up from 82 in the Qwen2.5 generation — and its vocabulary has grown to 250K tokens, up from 150K. The model is open source under a license permitting commercial use. API access runs through Alibaba Cloud Model Studio.

The vocabulary growth has practical consequences for bilingual teams. More Chinese characters map to single tokens, which reduces token count and cost for Chinese-heavy workloads compared to Western-first tokenizers. This is not a marginal difference at enterprise request volumes.

DeepSeek V3 / V3.2 (DeepSeek, 2025)

DeepSeek V3.2-Exp reduced API input pricing to $0.028 per million tokens. [Confirmed] The model uses a MoE architecture with a 128K context window and is available as open weights under the MIT license. DeepSeek R1 is the reasoning-specialized variant, optimized for math and multi-step chains; V3 is faster and cheaper for standard generation, summarization, and translation.

Cost-to-performance ratio is DeepSeek's primary claim. For [enterprise LLM](/category/enterprise-llm) deployments handling millions of daily requests, a 140× pricing gap against o1 is not a footnote; it restructures the budget entirely. This is where DeepSeek wins, not on peak capability.
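To make the budget point concrete, here is a minimal sketch of the arithmetic. The $0.028 input price and the 140× multiple are the figures quoted in this article and are not re-derived here; the request volume and tokens per request are hypothetical placeholders to replace with your own numbers.

```python
from decimal import Decimal

V32_INPUT_USD_PER_M = Decimal("0.028")  # V3.2-Exp input price quoted in this article
CLAIMED_GAP = Decimal("140")            # the article's stated multiple, taken as given


def daily_input_cost(requests_per_day: int, tokens_per_request: int,
                     usd_per_million: Decimal) -> Decimal:
    """Daily input-token spend in USD at a given price per million tokens."""
    total_tokens = Decimal(requests_per_day) * Decimal(tokens_per_request)
    return total_tokens / Decimal(1_000_000) * usd_per_million


# Hypothetical workload, chosen only to make the arithmetic visible.
REQUESTS_PER_DAY = 5_000_000
TOKENS_PER_REQUEST = 800

cheap = daily_input_cost(REQUESTS_PER_DAY, TOKENS_PER_REQUEST, V32_INPUT_USD_PER_M)
expensive = daily_input_cost(REQUESTS_PER_DAY, TOKENS_PER_REQUEST,
                             V32_INPUT_USD_PER_M * CLAIMED_GAP)

print(f"V3.2-Exp input spend per day: ${cheap:,.2f}")
print(f"At a 140x price per day:      ${expensive:,.2f}")
```

Output tokens are deliberately left out because this article only quotes an input price; a real projection should add them from the vendor's current price sheet.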

Baidu ERNIE 4.5 (Baidu, 2025)

ERNIE 4.5 became open source (Apache 2.0) on June 30, 2025. [Confirmed] The flagship 300B-A47B variant is natively multimodal — it processes text, image, audio, and video within one model. It deploys via Baidu AI Cloud's Qianfan platform and is compatible with the openai-python SDK. Baidu claims ERNIE 4.5 outperforms GPT-4.5 on multiple benchmarks. [Likely — based on Baidu's own evaluations; independent third-party validation was incomplete as of early 2026.]

[Baidu ERNIE](/tool/baidu-ernie) performs best on tasks tied to its training corpus: formal Chinese documents, search-style queries, and customer service. English output is functional, but it is not where the model excels.
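The OpenAI SDK compatibility mentioned above suggests that requests follow the OpenAI chat completions shape. The sketch below builds such a request with the standard library and does not send it. The base URL and model name are hypothetical placeholders, not real Qianfan values, and whether Qianfan uses the same path is an assumption; take the actual endpoint and model identifier from Baidu's documentation.

```python
import json
import urllib.request

# Placeholders only: substitute values from the Qianfan documentation.
BASE_URL = "https://example.invalid/v1"  # hypothetical, not a real endpoint
MODEL_NAME = "ernie-4.5-placeholder"     # hypothetical model identifier


def build_chat_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    body = {
        "model": MODEL_NAME,
        "messages": [
            {"role": "system", "content": "Reply in formal Simplified Chinese."},
            {"role": "user", "content": prompt},
        ],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(body, ensure_ascii=False).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_chat_request("请起草一份正式的会议通知。", api_key="YOUR_API_KEY")
print(req.get_method(), req.full_url)
print(json.loads(req.data)["messages"][1]["content"])
```

If the endpoint is in fact OpenAI-compatible, the same body should work through the openai-python client by pointing its `base_url` at the vendor endpoint.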

Tencent Hunyuan (Tencent, ongoing)

Hunyuan is Tencent's enterprise model, available via Tencent Cloud API. Open-source variants (0.5B to 7B+) are available on GitHub and Hugging Face. The enterprise-grade model is API-only. Hunyuan supports 30+ languages and integrates with Tencent's LLM+RAG and multi-agent frameworks natively. [Confirmed] Tencent has also released Hunyuan-T1, a reasoning-focused variant positioned against DeepSeek R1. [Confirmed]

Outside Tencent's ecosystem, [Hunyuan](/tool/hunyuan) has fewer practical advantages over Qwen or DeepSeek. If you are not building on WeCom, Tencent Meeting, or Tencent Cloud infrastructure, the integration value disappears, and the open-source documentation is thinner than that of the alternatives.

Zhipu GLM-4.7 (Zhipu AI, December 2025)

GLM-4.7 carries 358B parameters, a 200K token context window, and an MIT license. [Confirmed] On LiveCodeBench, it scored 84.9% — ahead of Claude Sonnet 4.5. On SWE-bench Verified, 73.8% — the highest among open-source models at release. AIME 2025 performance reached 95.7%. [Confirmed]

[GLM-4.7](/tool/glm) is underestimated outside China. The 200K context window makes it the practical choice among [open-weights models](/category/open-weights-models) for workloads involving long documents such as legal contracts, research reports, and full codebases. GLM-5 is anticipated as the next release under the same open-weights strategy.

All five models handle Chinese–English code-switching in instructions. The real differences emerge in three areas.

Token efficiency. Models trained on large Chinese corpora tokenize Chinese text more efficiently. Qwen3.5's 250K vocabulary is the most direct example: more characters map to single tokens. With a Western-first tokenizer (as used in GPT-4o), a 1,000-character Chinese document might consume 500–700 tokens. With Qwen3.5, that estimate falls to 350–400. [Estimated — based on tokenizer architecture patterns; no direct measurement performed here.] At high volumes, this gap changes API cost projections materially.
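A rough sketch of how those estimates feed a cost projection. The tokens-per-1,000-characters rates are midpoints of the estimated ranges above, not measurements, and the document volume and price are hypothetical placeholders. The same price is used on both sides so that only the tokenizer effect shows.

```python
def tokens_for_chars(num_chars: int, tokens_per_1k_chars: float) -> float:
    """Estimate token count for Chinese text from a tokens-per-1,000-characters rate."""
    return num_chars / 1000 * tokens_per_1k_chars


def monthly_input_cost(docs_per_day: int, chars_per_doc: int,
                       tokens_per_1k_chars: float,
                       usd_per_million_tokens: float, days: int = 30) -> float:
    """Projected monthly input cost in USD for a Chinese-heavy workload."""
    tokens = tokens_for_chars(chars_per_doc, tokens_per_1k_chars) * docs_per_day * days
    return tokens / 1_000_000 * usd_per_million_tokens


# Midpoints of the estimated ranges quoted above (estimates, not measurements).
WESTERN_FIRST_RATE = 600  # midpoint of 500-700 tokens per 1,000 characters
QWEN35_RATE = 375         # midpoint of 350-400 tokens per 1,000 characters

# Hypothetical workload and price.
DOCS_PER_DAY = 100_000
CHARS_PER_DOC = 1_000
PRICE = 1.00  # USD per million input tokens, placeholder applied to both

western = monthly_input_cost(DOCS_PER_DAY, CHARS_PER_DOC, WESTERN_FIRST_RATE, PRICE)
qwen = monthly_input_cost(DOCS_PER_DAY, CHARS_PER_DOC, QWEN35_RATE, PRICE)
savings = 1 - qwen / western

print(f"Western-first: ${western:,.2f}  Qwen3.5: ${qwen:,.2f}  saved: {savings:.1%}")
```

With these midpoints the token count drops by 37.5%. To replace the estimate with a measurement, run a sample of your own documents through each vendor's tokenizer and substitute the observed rates.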

Instruction following in mixed-language contexts. GLM-4.7 and Qwen3.5 perform best when instructions arrive in one language and output is required in another. DeepSeek V3 performs strongest on code-switching within technical and code tasks. ERNIE 4.5 is the most reliable for Chinese-only formal output — it does not always maintain output language consistency when switching mid-session.

English creative quality. This is where the gap with GPT-4o and Claude 3.7 remains. For long-form English narrative, marketing copy, and culturally specific phrasing, Western models still lead. [Likely: consistent across practitioner reports as of early 2026, though the gap narrowed compared to 2024.] If English prose quality is the primary output requirement, these Chinese LLMs are not the first choice.

| Model | Open Weights | License | Context Window | API Provider |
|---|---|---|---|---|
| Qwen3.5 | Yes | Commercial-friendly | 128K | Alibaba Cloud |
| DeepSeek V3 | Yes | MIT | 128K | DeepSeek API |
| ERNIE 4.5 (300B) | Yes | Apache 2.0 | 128K [Estimated] | Baidu Qianfan |
| Hunyuan (enterprise) | Partial (small variants only) | Custom | 128K [Estimated] | Tencent Cloud |
| GLM-4.7 | Yes | MIT | 200K | Fireworks / Novita |

For air-gapped deployments — common in finance, government, and regulated healthcare — Qwen3.5, DeepSeek V3, and GLM-4.7 are the strongest options. All three support standard inference frameworks: vLLM, TensorRT-LLM, and llama.cpp for quantized smaller variants. [Confirmed for Hunyuan's serving stack via NVIDIA GTC 2025 documentation.]

Hunyuan's open-source smaller variants carry a custom license — review it before any commercial derivative use. GLM-4.7 and DeepSeek V3 under MIT are the most permissive for derivative work. ERNIE 4.5's Apache 2.0 is safe for commercial derivatives, with standard attribution requirements.
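The deployment and license constraints above can be turned into a simple shortlist filter. This is a sketch that encodes the comparison table as data; the field names and the `shortlist` helper are illustrative, and the two context figures marked as estimated in the table stay flagged as such.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Set


@dataclass(frozen=True)
class ModelOption:
    name: str
    full_open_weights: bool  # False where only small variants are open
    license: str
    context_k: int           # context window in thousands of tokens
    context_estimated: bool  # True where the table marks the value [Estimated]


# Values copied from the comparison table in this article.
MODELS = [
    ModelOption("Qwen3.5", True, "Commercial-friendly", 128, False),
    ModelOption("DeepSeek V3", True, "MIT", 128, False),
    ModelOption("ERNIE 4.5 (300B)", True, "Apache 2.0", 128, True),
    ModelOption("Hunyuan (enterprise)", False, "Custom", 128, True),
    ModelOption("GLM-4.7", True, "MIT", 200, False),
]


def shortlist(models: Iterable[ModelOption], min_context_k: int = 0,
              allowed_licenses: Optional[Set[str]] = None) -> List[str]:
    """Names of fully open-weight models meeting the context and license constraints."""
    picks = []
    for m in models:
        if not m.full_open_weights:
            continue
        if m.context_k < min_context_k:
            continue
        if allowed_licenses is not None and m.license not in allowed_licenses:
            continue
        picks.append(m.name)
    return picks


print(shortlist(MODELS, allowed_licenses={"MIT", "Apache 2.0"}))
print(shortlist(MODELS, min_context_k=200))
```

A filter like this narrows the candidates only; the actual license text still needs legal review before any derivative or commercial use.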

Checklist before selecting a model:

Best for... guide:

| Use Case | Best Model | Why |
|---|---|---|
| Bilingual technical documentation | Qwen3.5 or GLM-4.7 | Strong Chinese + open weights |
| High-volume, cost-sensitive API | DeepSeek V3.2 | $0.028/M input tokens |
| Long document analysis (>50K tokens) | GLM-4.7 | 200K context, MIT license |
| Tencent / WeCom integration | Hunyuan | Native ecosystem, RAG + agent stack |
| Multimodal + Chinese | ERNIE 4.5 | Text, image, audio, video in one model |
| Air-gapped enterprise deployment | Qwen3.5 or DeepSeek V3 | Open weights + permissive license |
| Reasoning-heavy tasks | DeepSeek R1 or Hunyuan-T1 | Reasoning-optimized variants |

For teams evaluating [DeepSeek](/tool/deepseek) for API integration: start with V3.2, not R1. R1 costs $0.55 per million input tokens and is optimized for extended reasoning chains, not standard generation. For teams evaluating [Qwen](/tool/qwen): Qwen3.5 is the current benchmark as of February 2026. The Qwen2.5 generation remains functional but is one generation behind.

1. Your compliance team has not verified data residency.

API calls to Baidu Qianfan and Tencent Cloud are primarily processed in China-based data centers. For data subject to GDPR, HIPAA, or sector-specific residency requirements, verify processing location before routing production workloads. Qwen and DeepSeek have expanding international infrastructure — confirm for your specific endpoint and agreement. [Confirmed as a general data residency principle; endpoint-level verification requires vendor documentation.]

2. Long-form English prose is the primary output.

If your team's output is English-first marketing, editorial writing, or customer communications, these models require more post-editing than GPT-4o or Claude 3.7 Sonnet. The gap is smaller than in 2024, but it is real. Factual Chinese content and technical writing in Chinese — that is where these models earn their place.

3. You need Western enterprise SLAs and certified compliance documentation.

OpenAI, Anthropic, and Google offer enterprise agreements with defined uptime SLAs, SOC 2 and ISO 27001 certifications, and English-language enterprise support at scale. Comparable Western-facing enterprise support from Chinese AI labs is not yet at the same maturity level as of February 2026. [Likely — based on publicly available enterprise documentation.]

Q: Are these models truly open source, or just open weights?

The terms are not equivalent. DeepSeek V3 and GLM-4.7 publish weights under MIT — free for commercial use and fine-tuning. ERNIE 4.5 uses Apache 2.0. Qwen3.5 has a custom license permitting commercial use with additional terms above 100M monthly active users. Training code is not always included. Verify the specific license before production deployment.

Q: When should I use DeepSeek R1 instead of V3?

R1 is optimized for reasoning chains: math, logic, and multi-step problem solving. It costs $0.55 per million input tokens versus $0.028 for V3.2-Exp. Use R1 when the reasoning process is the output. Use V3 for generation, summarization, and translation at scale.
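The rule of thumb above can be written as a small routing function. This is a sketch: the task labels and the `pick_model` helper are hypothetical, the model keys are descriptive labels rather than actual API model identifiers, and the prices are the input prices quoted in this article.

```python
# Input prices in USD per million tokens, as quoted in this article.
INPUT_PRICE = {"R1": 0.55, "V3.2-Exp": 0.028}

# Hypothetical task labels; adapt them to however your pipeline tags requests.
REASONING_TASKS = {"math", "logic", "multi_step"}
GENERATION_TASKS = {"generation", "summarization", "translation"}


def pick_model(task_type: str) -> str:
    """Route reasoning-heavy tasks to R1 and everything else to V3.2-Exp."""
    if task_type in REASONING_TASKS:
        return "R1"
    if task_type in GENERATION_TASKS:
        return "V3.2-Exp"
    # Unknown task types default to the cheaper model.
    return "V3.2-Exp"


price_ratio = INPUT_PRICE["R1"] / INPUT_PRICE["V3.2-Exp"]

for task in ("math", "translation", "summarization"):
    model = pick_model(task)
    print(f"{task:>13} -> {model} (${INPUT_PRICE[model]}/M input)")
print(f"R1 input tokens cost about {price_ratio:.1f}x as much as V3.2-Exp")
```

Defaulting unknown task types to the cheaper model is a design choice for cost control; a team that prioritizes answer quality on ambiguous requests might default the other way.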

Q: Can these models run on local hardware?

Smaller variants can. Qwen3.5 activates 17B parameters per step, which lowers compute per token, but all 397B weights must still be held in memory, so full-scale serving means a multi-GPU setup with A100s or H100s. GLM-4.7 at 358B total parameters likewise needs substantial infrastructure for full-scale serving. ERNIE and Hunyuan sub-7B variants run on consumer hardware with quantization.
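A back-of-the-envelope sketch of the hardware question, using the parameter counts quoted in this article. It counts weight memory only. The 80 GB per GPU figure and the 90% usable fraction are assumptions, and KV cache, activations, and framework overhead come on top, so treat the GPU counts as lower bounds.

```python
import math

# Bytes per parameter for common weight formats.
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(total_params_billions: float, precision: str) -> float:
    """Weights-only memory in GB (1 GB = 1e9 bytes); excludes KV cache and activations."""
    return total_params_billions * BYTES_PER_PARAM[precision]


def min_gpus(total_params_billions: float, precision: str,
             gpu_memory_gb: float = 80.0, usable_fraction: float = 0.9) -> int:
    """Lower bound on GPU count needed just to hold the weights."""
    usable = gpu_memory_gb * usable_fraction
    return math.ceil(weight_memory_gb(total_params_billions, precision) / usable)


# Total parameter counts from this article. For an MoE model, memory is driven by
# the total count, not by the parameters active per step.
for name, params_b in [("Qwen3.5", 397), ("GLM-4.7", 358)]:
    for precision in ("bf16", "int4"):
        print(f"{name} {precision}: {weight_memory_gb(params_b, precision):.0f} GB, "
              f">= {min_gpus(params_b, precision)} x 80 GB GPUs")
```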

Q: Which model produces the best Chinese-language output?

Domain matters. Formal documents and search-style tasks: ERNIE 4.5. Mixed technical Chinese and code: Qwen3.5 or GLM-4.7. Cost-efficient bilingual generation at volume: DeepSeek V3. No single model wins all domains. Check the [bilingual LLM tools](/category/bilingual-llm) directory for task-specific comparisons.

Q: Are these APIs accessible outside China?

Qwen, DeepSeek, and GLM-4.7 (via Fireworks and Novita) are accessible globally without restriction. Baidu Qianfan has limited international reach. Tencent Cloud is technically global but requires account setup that is more complex for non-China entities.

Qwen3.5, DeepSeek V3, and GLM-4.7 are production-viable for bilingual workflows today. The benchmark evidence is documented. The open weights are available. The context windows handle most enterprise tasks.

The practical first step is a controlled comparison: take a representative sample of your actual Chinese–English workload and run it through Qwen3.5, DeepSeek V3.2, and GLM-4.7 in parallel. Measure output quality against your team's editing overhead and your real token costs — not toy-scale estimates.
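One way to structure that comparison is a small harness that runs every sample through every model and aggregates token cost and editing effort. This is a sketch: `ModelUnderTest`, `run_comparison`, and the stub functions are hypothetical names. The stubs only let the example run offline and should be replaced with real API calls, each model's own tokenizer, and editing times recorded by your reviewers.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, Dict, List


@dataclass
class ModelUnderTest:
    name: str
    generate: Callable[[str], str]      # wraps the real API call
    count_tokens: Callable[[str], int]  # wraps the model's own tokenizer
    usd_per_million_input: float


def run_comparison(samples: List[str], models: List[ModelUnderTest],
                   edit_minutes: Callable[[str, str, str], float]) -> Dict[str, dict]:
    """Run every sample through every model and aggregate cost and editing effort."""
    report = {}
    for m in models:
        tokens = sum(m.count_tokens(s) for s in samples)
        outputs = [m.generate(s) for s in samples]
        report[m.name] = {
            "input_tokens": tokens,
            "input_cost_usd": tokens / 1_000_000 * m.usd_per_million_input,
            "mean_edit_minutes": mean(
                edit_minutes(m.name, s, o) for s, o in zip(samples, outputs)
            ),
        }
    return report


# Offline stubs: placeholders for real model calls and real reviewer timings.
def fake_generate(prompt: str) -> str:
    return prompt.upper()


samples = [
    "请总结这份合同 and list the key risks.",
    "Translate the release notes into 中文.",
]
models = [
    ModelUnderTest("model-a", fake_generate, lambda s: len(s), 0.028),
    ModelUnderTest("model-b", fake_generate, lambda s: len(s) // 2, 0.50),
]
report = run_comparison(samples, models, edit_minutes=lambda name, s, o: 5.0)
for name, row in report.items():
    print(name, row)
```

Keeping the editing-time callback separate from generation makes it easy to plug in human-recorded timings later without changing the harness.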

Start with DeepSeek V3.2 for cost benchmarking and Qwen3.5 for quality. The results will likely challenge the assumption that Western-first models are the safe default.
